Components (cards, all sorts of aluminium boxes, everything you can touch and smell) are commonly called hardware.

Software is the logical "component" of a computer. Usually, when people think of software, they think of either operating systems or the programs that run on their hardware. Software is, both in practice and in theory, information. The unit of information is the bit (presented further down the page).

Firmware is a rather awkward term, and not everybody likes it. Firmware is software that runs on specific hardware: for example, the BIOS (Basic Input-Output System), which is specific to each motherboard, or the boot ROM of a network card. Firmware may also be thought of as the very fundamental software that a hardware component needs in order to run.

When dealing with computers, the most common form of current is Alternating Current (AC), which is commonly described by its frequency. You should develop at least a basic understanding of AC, so that you grasp the implications of your actions whatever the situation.

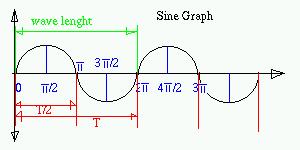

In the (admittedly rough) graph above, I have plotted the sine function. It is a good model for what I am going to explain. Observe the wavelength: it is the shortest distance after which the wave starts repeating itself exactly as it began. It is measured in meters. The time the wave needs to complete one wavelength, noted T, is called the period (for a computer, the clock period), and it is measured in seconds. Frequency (f) is the inverse of T: the number of periods completed in one second.

$$f = \frac{1}{T}, \qquad 1\,\text{Hz} = 1\,\text{s}^{-1} = \frac{1}{1\,\text{s}}$$
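As a quick illustration of the relation above, here is a minimal sketch in Python. It assumes the period is given in seconds and the frequency in hertz. The function names `frequency_from_period` and `period_from_frequency` are hypothetical and chosen only for this example.

```python
# A minimal sketch of f = 1/T and T = 1/f, assuming SI units (seconds, hertz).
# The function names are hypothetical, chosen only for illustration.


def frequency_from_period(period_s: float) -> float:
    """Return the frequency in hertz for a period given in seconds."""
    if period_s <= 0:
        raise ValueError("period must be positive")
    return 1.0 / period_s


def period_from_frequency(freq_hz: float) -> float:
    """Return the period in seconds for a frequency given in hertz."""
    if freq_hz <= 0:
        raise ValueError("frequency must be positive")
    return 1.0 / freq_hz


if __name__ == "__main__":
    # A wave that repeats every 0.5 s completes 2 periods per second: 2 Hz.
    print(frequency_from_period(0.5))  # 2.0
    # A 1 kHz signal (1000 Hz) has a period of one millisecond.
    print(period_from_frequency(1000))  # 0.001
```

The two functions are the same formula read in opposite directions. The higher the frequency, the shorter each period.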

Useful Hz multiples:

| Multiple | Equivalent | In hertz |
|---|---|---|
| 1 kHz | 1000 Hz | 10³ Hz |
| 1 MHz | 1000 kHz | 10⁶ Hz |
| 1 GHz | 1000 MHz | 10⁹ Hz |
| 1 THz | 1000 GHz | 10¹² Hz |
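The multiples in the table are successive powers of 10, so a raw value in hertz can be rewritten with the largest prefix that fits. The helper `format_hz` below is a hypothetical sketch of that idea, not a standard function.

```python
# Hypothetical helper: express a frequency in hertz using the largest
# prefix from the Hz multiples table (each step is a power of 10).
PREFIXES = [
    (10**12, "THz"),
    (10**9, "GHz"),
    (10**6, "MHz"),
    (10**3, "kHz"),
    (1, "Hz"),
]


def format_hz(freq_hz: float) -> str:
    """Return a string such as '2.4 GHz' for freq_hz = 2_400_000_000."""
    for factor, unit in PREFIXES:
        if abs(freq_hz) >= factor:
            # :g drops trailing zeros, so 3.0 prints as "3".
            return f"{freq_hz / factor:g} {unit}"
    return f"{freq_hz:g} Hz"  # below 1 Hz no prefix from the table applies


print(format_hz(2_400_000_000))  # 2.4 GHz
print(format_hz(1500))           # 1.5 kHz
```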

Usage in common speech:

A computer represents numbers in the binary numbering system, using bits. One bit is either a "0" or a "1", so a single bit can represent two values. Binary "1" equals "1" in decimal, and it takes two bits, "10", to represent decimal "2". Remember that any number given to the machine is converted to bits, and the results of the computations are converted back to decimal.
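To see the conversion both ways, Python's built-ins are enough: `format(n, "b")` writes an integer's binary digits, and `int(text, 2)` reads them back. The wrapper names `to_binary` and `from_binary` are illustrative assumptions.

```python
# Illustrative wrappers around Python built-ins for decimal <-> binary.


def to_binary(n: int) -> str:
    """Return the binary digits of a non-negative integer, e.g. 5 -> '101'."""
    if n < 0:
        raise ValueError("only non-negative integers are handled here")
    return format(n, "b")


def from_binary(bits: str) -> int:
    """Parse a string of 0s and 1s as a base-2 number, e.g. '101' -> 5."""
    return int(bits, 2)


for n in [0, 1, 2, 5, 255]:
    bits = to_binary(n)
    # Converting back must give the original decimal number.
    print(f"{n} decimal = {bits} binary -> {from_binary(bits)}")
```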

One byte consists of 8 bits, and it is the standard unit for measuring storage. There is a catch with bytes: their multipliers are powers of 2 rather than powers of 10, so each step up is 2¹⁰ = 1024 instead of 1000!
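A short arithmetic check of why bytes behave this way. Each extra bit doubles the number of possible patterns, so powers of 2 appear naturally. The names `distinct_values` and `KILO_BINARY` are assumptions made for this sketch.

```python
BITS_PER_BYTE = 8


def distinct_values(bits: int) -> int:
    """Number of different patterns that `bits` binary digits can hold."""
    return 2 ** bits


# One byte (8 bits) can hold 2**8 = 256 different values, 0 through 255.
values_per_byte = distinct_values(BITS_PER_BYTE)
print(values_per_byte)  # 256

# Storage multipliers step by 2**10 = 1024, not by 10**3 = 1000.
KILO_BINARY = 2 ** 10
print(KILO_BINARY, 10 ** 3)  # 1024 1000

# So "1 GB" in this convention is 1024**3 bytes, about 7% more than 10**9.
print(KILO_BINARY ** 3)  # 1073741824
```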

Useful bit and byte multipliers:

| Unit | Equivalent | As a power of two |
|---|---|---|
| 1 byte | 8 bits | 2³ bits |
| 1 KB | 1024 bytes | 2¹⁰ bytes |
| 1 MB | 1024 KB | 2²⁰ bytes |
| 1 GB | 1024 MB | 2³⁰ bytes |
| 1 TB | 1024 GB | 2⁴⁰ bytes |
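Using the 1024-based multipliers from the table, a raw size in bytes can be turned into the familiar KB/MB/GB form. The following is a hypothetical sketch; `format_bytes` is not a library function.

```python
# Hypothetical helper: express a size in bytes with 1024-based multipliers.
UNITS = ["bytes", "KB", "MB", "GB", "TB"]


def format_bytes(size: int) -> str:
    """Express a size in bytes, e.g. 1536 -> '1.5 KB'."""
    value = float(size)
    for unit in UNITS[:-1]:
        if value < 1024:
            return f"{value:g} {unit}"
        value /= 1024  # move up one multiplier
    return f"{value:g} {UNITS[-1]}"  # TB is the largest unit in the table


print(format_bytes(512))         # 512 bytes
print(format_bytes(1536))        # 1.5 KB
print(format_bytes(1024 ** 3))   # 1 GB
```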

This sums up some of the very basic notions you will need.

By now you should know:

- What hardware and software are

- That you use Hz to get an idea of speed.

- That the byte is the measuring unit for software size.

Written by Nagy Andrei (27 April 2003), www.yioth.3x.ro